A major incident will be traced back to an AI coding tool.

It's only a matter of when.

👋 Hi, I’m Thomas. Welcome to a new edition of Beyond Runtime, where I dive into the messy, fascinating world of distributed systems, debugging, AI, and system design, all through the lens of a CTO with 20+ years in the backend trenches.

QUOTE OF THE WEEK:

“AI assistance can cost more time than it saves.” - Matthew Hansen

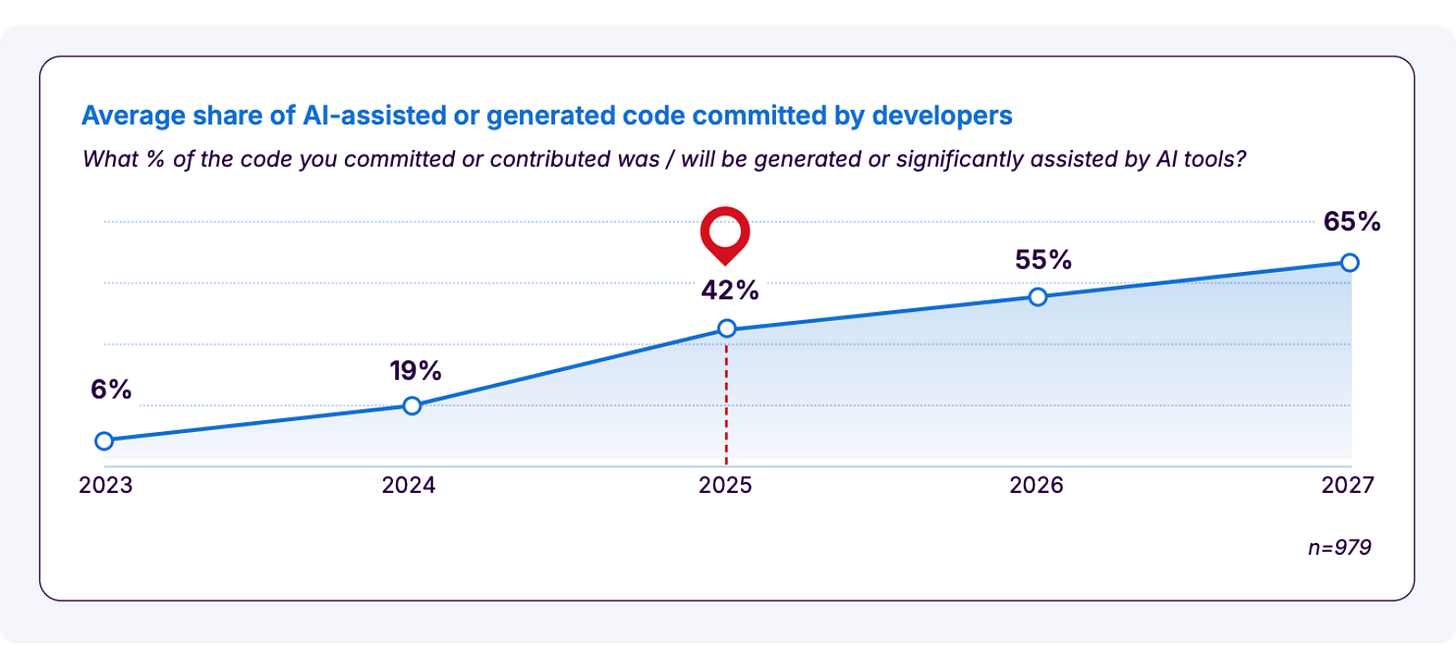

AI-assisted software development is accelerating faster than most people expected. Developers surveyed in the recent State of Code Developer Survey from Sonar report that 42% of their committed code is currently AI-generated or significantly AI-assisted. They expect that number to jump to 65% by 2027.

That’s a remarkable shift in how software gets built, happening in real time.

And yet, included in the same survey is a number that should give every engineering leader pause: 96% of developers don’t fully trust that AI-generated code is functionally correct.

Let that sink in for a second. Nearly every developer using these tools has reservations about what those tools are producing.

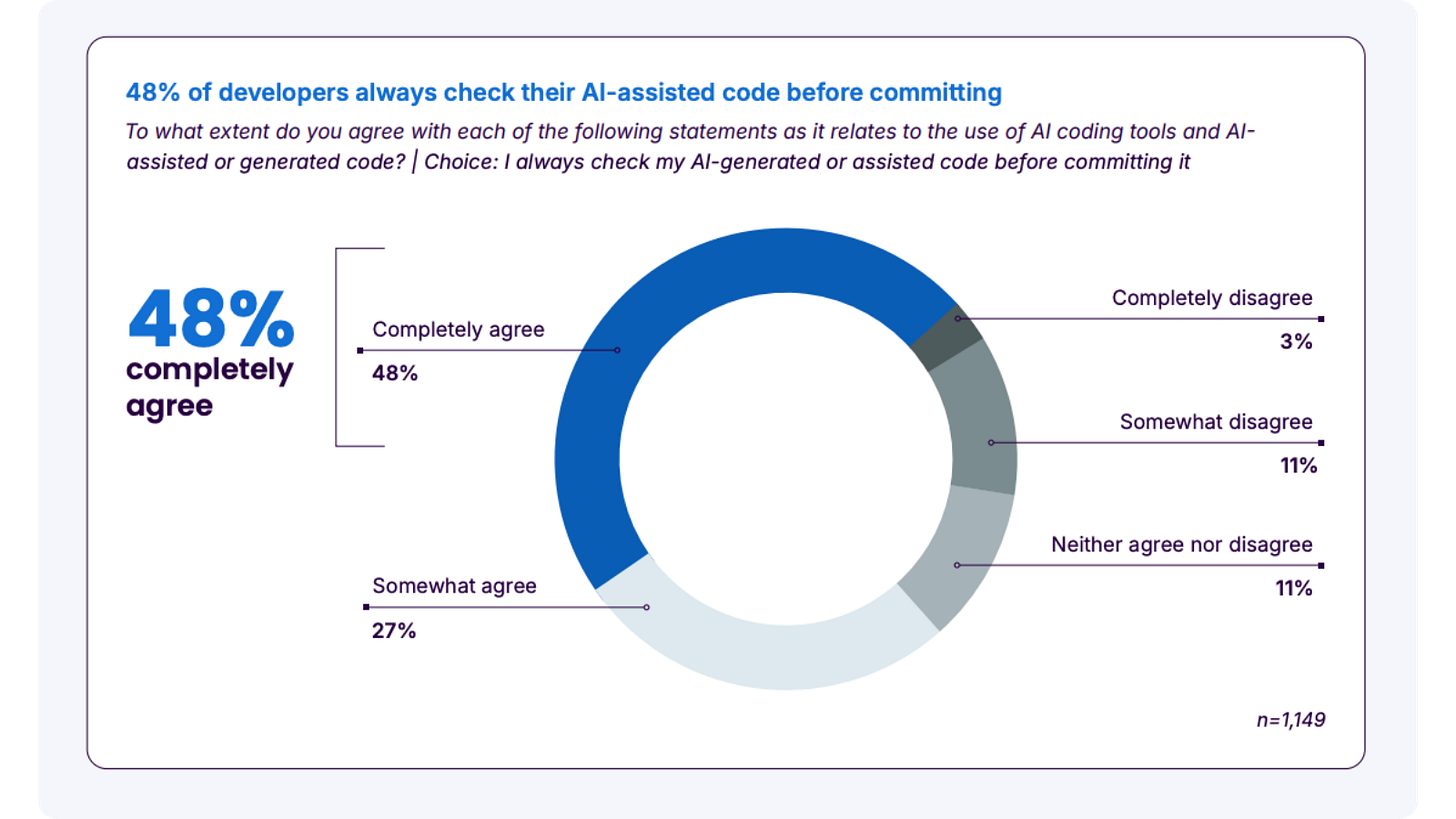

The more alarming number, though, isn’t the 96%. It’s the 48%: the share of developers who say they always check their AI-assisted code before committing it. The other 52% don’t always check, which means roughly half of developers are, at least some of the time, shipping code they don’t fully trust and haven’t fully verified.

The rest of the data fills in the picture:

61% agree that AI often produces code that looks correct but isn’t reliable, and the same proportion agree it takes a lot of effort in prompting and fixing to actually get good code out of these tools.

57% of engineers worry that using AI puts sensitive company or customer data at risk.

35% of engineers are accessing AI tools through personal accounts, meaning the code they’re generating for work is flowing through systems their employers have no visibility into and no control over.

Taken together, this is a portrait of a technology being adopted fast, with the safety checks struggling to keep up.

We outsourced the easy part and kept the hard part

Here’s something that doesn’t get said often enough: writing code was never the hard part of software development. It’s the part that looks impressive in a demo, but experienced engineers know it’s the least cognitively demanding phase of the job.

The hard part is everything that comes before and around it: understanding the system you’re working in, knowing the constraints and trade-offs, holding the context of why a particular approach makes sense right now for this codebase, this team, this set of users. That’s the work that’s actually difficult. Writing is just the expression of it.

When you hand the writing to AI, you’re left with only the hard work. And here’s the catch: if you skipped the investigation and the trade-off thinking because the AI already handed you an answer, you’ve also lost the context you’d normally have built up by doing the writing yourself. (Matthew Hansen does a great job of explaining this in the article “AI Makes the Easy Part Easier and the Hard Part Harder”.)

You arrive at the review stage with a block of code you didn’t write, in a system you don’t fully understand, trying to verify something you haven’t reasoned through.

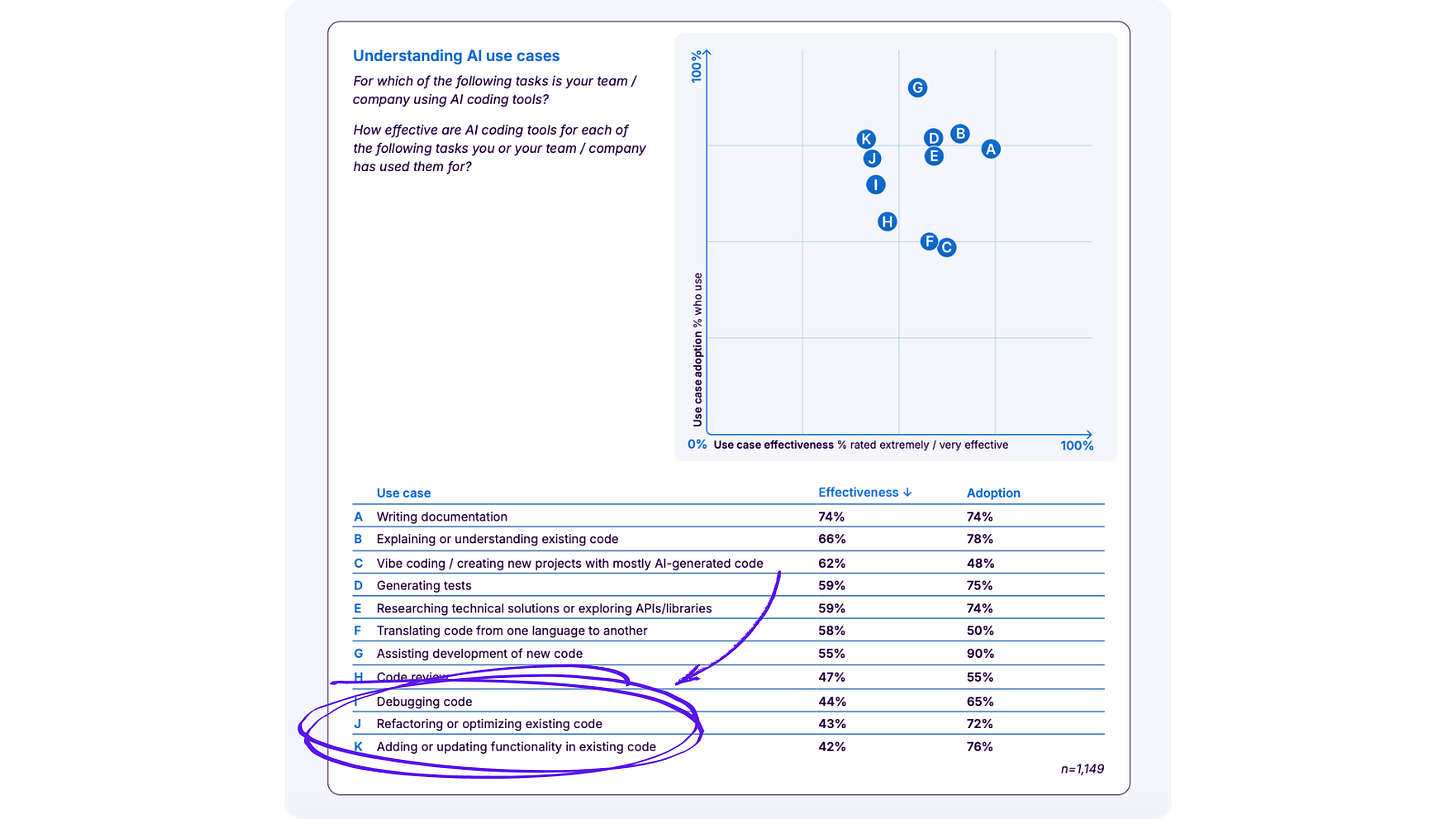

The Sonar survey data reflects this. Developers report that AI is most effective at writing documentation and explaining existing code (tasks where the output is legible and easy to check). It’s least effective at debugging, refactoring existing systems and adding functionality to live codebases: exactly the tasks that require deep contextual understanding.

The incident is coming

AI has already entered the software development lifecycle at almost every level: from prototypes (88% of teams) to mission-critical production services (58%). It’s not an experiment anymore.

Research by Aikido Security (2026 - State of AI in Security & Development) found that AI-generated code is responsible for around one in five security vulnerabilities discovered. Research by Apiiro inside Fortune 50 enterprises suggests that while developers using AI coding assistants can be up to four times faster than those working without them, the code they ship carries ten times the risk.

Speed and safety are, at least for now, moving in opposite directions.

The conditions for a serious incident are being assembled quietly: unverified code, personal accounts bypassing corporate controls, junior developers leaning heavily on AI tools they trust more than they should, and a verification culture that hasn’t kept pace with the velocity of generation. At some point, those conditions will produce an outcome that makes the news.

The question for engineering leaders right now is whether their organisation will be the one where that incident plays out in public, or whether they’ll have the processes in place to catch the problem before it becomes a crisis.

You don’t need to understand everything, but you need to understand the right things

If we can’t fully understand AI-generated code, should we use it at all? In my opinion, answering “no” is probably too strong a position. After all, we’ve been living with an incomplete understanding of our systems for decades.

Modern CPUs run billions of operations per second through architectures most software developers couldn’t explain in detail. Operating systems manage memory, scheduling, and I/O in ways that are abstracted away from almost everyone building on top of them. We have mental models of how the layers beneath us work, but those models are simplified, sometimes dangerously so.

And yet software development has still managed to function and (when all the planets align) flourish.

The answer to incomplete understanding isn’t necessarily to achieve complete understanding. That’s not realistic, and for most purposes, it’s not necessary.

The answer is to be able to get exactly the information you need, when you need it, without friction, so that when something goes wrong, you can understand precisely what happened, in the specific part of the system that matters right now.

The gap in most engineering tooling isn’t awareness of the whole system at all times. It’s the ability to reconstruct the exact context of a specific problem (the sequence of events, the state of the system, the decisions that led there) quickly enough to matter.
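As a rough illustration of what reconstructing the context of one specific problem can look like, here is a minimal Python sketch. It assumes events from different services are already collected as structured records that share a correlation ID. The `Event` type, its field names, and the sample data are all hypothetical and not taken from any particular tool.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Event:
    # Hypothetical structured event; the field names are assumptions for illustration.
    timestamp: datetime
    service: str
    request_id: str
    message: str


def reconstruct_timeline(events, request_id):
    """Return only the events for one request, ordered by time."""
    relevant = [e for e in events if e.request_id == request_id]
    return sorted(relevant, key=lambda e: e.timestamp)


# Made-up sample data: events from several services, arriving out of order.
EVENTS = [
    Event(datetime(2026, 1, 1, 12, 0, 2), "payments", "req-42", "charge failed: timeout"),
    Event(datetime(2026, 1, 1, 12, 0, 0), "frontend", "req-42", "checkout clicked"),
    Event(datetime(2026, 1, 1, 12, 0, 1), "frontend", "req-7", "page loaded"),
    Event(datetime(2026, 1, 1, 12, 0, 1), "api", "req-42", "POST /orders received"),
]

if __name__ == "__main__":
    # Print the sequence of events for the one failing request only.
    for event in reconstruct_timeline(EVENTS, "req-42"):
        print(event.timestamp.time(), event.service, event.message)
```

The sketch only works if every layer tags its events with the same identifier. That tagging is the part that usually has to be designed in ahead of time.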

As AI generates more of the code we rely on, that kind of targeted, on-demand visibility becomes less of a nice-to-have and more of a prerequisite for operating safely.

💜 This newsletter is sponsored by Multiplayer.app.

Full stack session recording. End-to-end visibility in a single click.

📚 Interesting Articles & Resources

96% Engineers Don’t Fully Trust AI Output, Yet Only 48% Verify It - Gregor Ojstersek

An in-depth review of the “State of Code - Developer Survey report” by Sonar, based on responses from 1,100+ developers.

Nobody knows how the whole system works - Lorin Hochstein

Hochstein reflects on how deep and layered modern software and technology stacks have become, to the point that no individual truly understands every level (from hardware circuits up through application logic). This article was inspired by recent debates on LinkedIn about the risks posed by developers who ship AI-generated code without deep understanding.

How AI Is Changing What It Means to Be a 10x Engineer - Eric Roby

Rather than code output alone, Roby defines a 10x engineer as someone who multiplies team velocity through mentoring, infrastructure work, architectural clarity, and removal of bottlenecks. AI increases raw speed of code production, but true leverage comes from high-level thinking (architecture, cost optimization, systems design) that AI still struggles to automate.